Stem cells from a fetus can travel to the heart and regenerate the muscle, essentially saving a mother’s life.

Story by Big Think

In rare cases, a woman’s heart can start to fail in the months before or after giving birth. The all-important muscle weakens as its chambers enlarge, reducing the amount of blood pumped with each beat. Peripartum cardiomyopathy can threaten the lives of both mother and child. Viral illness, nutritional deficiency, the bodily stress of pregnancy, or an abnormal immune response could all play a role, but the causes aren’t concretely known.

If there is a silver lining to peripartum cardiomyopathy, it’s that it is perhaps the most survivable form of heart failure. A remarkable 50% of women recover spontaneously. And there’s an even more remarkable explanation for that glowing statistic: The fetus’ stem cells migrate to the heart and regenerate the beleaguered muscle. In essence, the developing or recently born child saves its mother’s life.

Saving mama

While this process has not been observed directly in humans, it has been witnessed in mice. In a 2015 study, researchers tracked stem cells from fetal mice as they traveled to mothers’ damaged cardiac cells and integrated themselves into hearts.

Scientists also have spotted cells from the fetus within the hearts of human mothers, as well as countless other places inside the body, including the skin, spleen, liver, brain, lung, kidney, thyroid, lymph nodes, salivary glands, gallbladder, and intestine. These cells essentially get everywhere. While most are eliminated by the immune system during pregnancy, some can persist for an incredibly long time — up to three decades after childbirth.

This integration of the fetus’ cells into the mother’s body has been given a name: fetal microchimerism. The process appears to start between the fourth and sixth week of gestation in humans. Scientists are actively trying to suss out its purpose. Fetal stem cells, which can differentiate into all sorts of specialized cells, appear to target areas of injury. So their role in healing seems apparent. Evolutionarily, this function makes sense: It is in the fetus’ best interest that its mother remains healthy.

Sending cells into the mother’s body may also prime her immune system to grow more tolerant of the developing fetus. Successful pregnancy requires that the immune system not see the fetus as an interloper and thus dispatch cells to attack it.

Fetal microchimerism

But fetal microchimerism might not be entirely beneficial. Greater concentrations of the cells have been associated with various autoimmune diseases such as lupus, Sjogren’s syndrome, and even multiple sclerosis. After all, they are foreign cells living in the mother’s body, so it’s possible that they might trigger subtle, yet constant inflammation. Fetal cells also have been linked to cancer, although it isn’t clear whether they abet or hinder the disease.

A team of Spanish scientists summarized the apparent give and take of fetal microchimerism in a 2022 review article. “On the one hand, fetal microchimerism could be a source of progenitor cells with a beneficial effect on the mother’s health by intervening in tissue repair, angiogenesis, or neurogenesis. On the other hand, fetal microchimerism might have a detrimental function by activating the immune response and contributing to autoimmune diseases,” they wrote.

Regardless of fetal cells’ net effect, their existence alone is intriguing. In a paper published earlier this year, University of London biologist Francisco Úbeda and University of Western Ontario mathematical biologist Geoff Wild noted that these cells might very well persist within mothers for life.

“Therefore, throughout their reproductive lives, mothers accumulate fetal cells from each of their past pregnancies including those resulting in miscarriages. Furthermore, mothers inherit, from their own mothers, a pool of cells contributed by all fetuses carried by their mothers, often referred to as grandmaternal microchimerism.”

So every mother may carry within her literal pieces of her ancestors.

How the body's immune resilience affects our health and lifespan

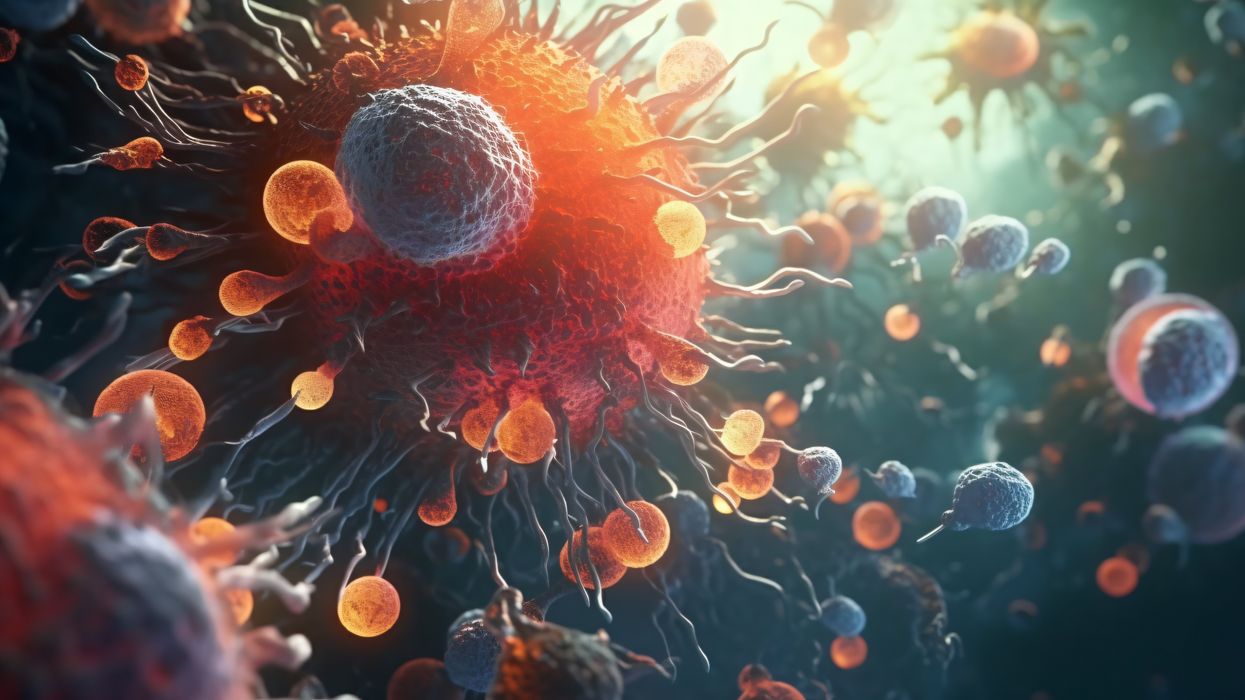

Immune cells battle an infection.

Story by Big Think

It is a mystery why humans manifest vast differences in lifespan, health, and susceptibility to infectious diseases. However, a team of international scientists has revealed that the capacity to resist or recover from infections and inflammation (a trait they call “immune resilience”) is one of the major contributors to these differences.

Immune resilience involves controlling inflammation and preserving or rapidly restoring immune activity at any age, explained Weijing He, a study co-author. He and his colleagues discovered that people with the highest level of immune resilience were more likely to live longer, resist infection and recurrence of skin cancer, and survive COVID and sepsis.

Measuring immune resilience

The researchers measured immune resilience in two ways. The first is based on the relative quantities of two types of immune cells, CD4+ T cells and CD8+ T cells. CD4+ T cells coordinate the immune system’s response to pathogens and are often used to measure immune health (with higher levels typically suggesting a stronger immune system). However, in 2021, the researchers found that a low level of CD8+ T cells (which are responsible for killing damaged or infected cells) is also an important indicator of immune health. In fact, patients with high levels of CD4+ T cells and low levels of CD8+ T cells during SARS-CoV-2 and HIV infection were the least likely to develop severe COVID and AIDS.
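The first measure can be read as a simple two-marker check: a favorable profile pairs a high CD4+ T cell level with a low CD8+ T cell level. The sketch below illustrates that logic only. The cutoff values and the function name are hypothetical placeholders, since the article does not report the thresholds the researchers used.

```python
# Hypothetical cutoffs in cells per microliter, chosen for illustration only.
# The article does not state the study's actual thresholds.
CD4_HIGH_CUTOFF = 800
CD8_LOW_CUTOFF = 500


def has_favorable_profile(cd4_count: float, cd8_count: float) -> bool:
    """Return True when CD4+ is high and CD8+ is low, the combination
    the article associates with the best outcomes."""
    high_cd4 = cd4_count >= CD4_HIGH_CUTOFF
    low_cd8 = cd8_count < CD8_LOW_CUTOFF
    return high_cd4 and low_cd8


if __name__ == "__main__":
    # Made-up example values, not patient data.
    print(has_favorable_profile(950, 400))
    print(has_favorable_profile(950, 900))
```

Either marker alone is not enough in this reading: a high CD4+ count with a high CD8+ count does not qualify as the favorable combination.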

In the same 2021 study, the researchers identified a second measure of immune resilience that involves two gene expression signatures correlated with an infected person’s risk of death. One of the signatures was linked to a higher risk of death; it includes genes related to inflammation — an essential process for jumpstarting the immune system but one that can cause considerable damage if left unbridled. The other signature was linked to a greater chance of survival; it includes genes related to keeping inflammation in check. These genes help the immune system mount a balanced immune response during infection and taper down the response after the threat is gone. The researchers found that participants who expressed the optimal combination of genes lived longer.

Immune resilience and longevity

The researchers assessed levels of immune resilience in nearly 50,000 participants of different ages and with various types of challenges to their immune systems, including acute infections, chronic diseases, and cancers. Their evaluation demonstrated that individuals with optimal levels of immune resilience were more likely to live longer, resist HIV and influenza infections, resist recurrence of skin cancer after kidney transplant, survive COVID infection, and survive sepsis.

However, a person’s immune resilience fluctuates all the time. Study participants who had optimal immune resilience before common symptomatic viral infections like a cold or the flu experienced a shift in their gene expression to poor immune resilience within 48 hours of symptom onset. As these people recovered from their infection, many gradually returned to the more favorable gene expression levels they had before. However, nearly 30% who once had optimal immune resilience did not fully regain that survival-associated profile by the end of the cold and flu season, even though they had recovered from their illness.

This could suggest that the recovery phase varies among people and diseases. For example, young female sex workers who had many clients and did not use condoms — and thus were repeatedly exposed to sexually transmitted pathogens — had very low immune resilience. However, most of the sex workers who began reducing their exposure to sexually transmitted pathogens by using condoms and decreasing their number of sex partners experienced an improvement in immune resilience over the next 10 years.

Immune resilience and aging

The researchers found that the proportion of people with optimal immune resilience tended to be highest among the young and lowest among the elderly. The researchers suggest that, as people age, they are exposed to a growing number of health conditions (acute infections, chronic diseases, cancers, etc.) that challenge their immune systems to undergo a “respond-and-recover” cycle. During the response phase, CD8+ T cells and inflammatory gene expression increase, and during the recovery phase, they go back down.

However, over a lifetime of repeated challenges, the immune system is slower to recover, altering a person’s immune resilience. Intriguingly, some people who are 90+ years old still have optimal immune resilience, suggesting that these individuals’ immune systems have an exceptional capacity to control inflammation and rapidly restore proper immune balance despite the many respond-and-recover cycles that their immune systems have faced.

Public health ramifications could be significant. Immune cell and gene expression profile assessments are relatively simple to conduct, and being able to determine a person’s immune resilience can help identify whether someone is at greater risk for developing diseases, how they will respond to treatment, and whether, as well as to what extent, they will recover.

AI was integral to creating Moderna's mRNA vaccine against COVID.

Story by Big Think

For most of history, artificial intelligence (AI) has been relegated almost entirely to the realm of science fiction. Then, in late 2022, it burst into reality, seemingly out of nowhere, with the popular launch of ChatGPT, the generative AI chatbot that solves tricky problems, designs rockets, has deep conversations with users, and even aces the bar exam.

But the truth is that before ChatGPT nabbed the public’s attention, AI was already here, and it was doing more important things than writing essays for lazy college students. Case in point: It was key to saving the lives of tens of millions of people.

AI-designed mRNA vaccines

As Dave Johnson, chief data and AI officer at Moderna, told MIT Technology Review’s In Machines We Trust podcast in 2022, AI was integral to creating the company’s highly effective mRNA vaccine against COVID. Moderna and Pfizer/BioNTech’s mRNA vaccines collectively saved between 15 and 20 million lives, according to one estimate from 2022.

Johnson described how AI was hard at work at Moderna, well before COVID arose to infect billions. The pharmaceutical company focuses on finding mRNA therapies to fight off infectious disease, treat cancer, or thwart genetic illness, among other medical applications. Messenger RNA molecules are essentially molecular instructions for cells that tell them how to create specific proteins, which do everything from fighting infection, to catalyzing reactions, to relaying cellular messages.

Johnson and his team put AI and automated robots to work making lots of different mRNAs for scientists to experiment with. Moderna quickly went from making about 30 per month to more than one thousand. They then created AI algorithms to optimize mRNA to maximize protein production in the body — more bang for the biological buck.

For Johnson and his team’s next trick, they used AI to automate science itself. Once Moderna’s scientists have an mRNA to experiment with, they do pre-clinical tests in the lab. They then pore over reams of data to see which mRNAs could progress to the next stage: animal trials. This process is long, repetitive, and soul-sucking, which makes it ill-suited to a creative scientist but great for a mindless AI algorithm. With scientists’ input, models were made to automate this tedious process.

All these AI systems were put in place over the past decade. Then COVID showed up. So when the genome sequence of the coronavirus was made public in January 2020, Moderna was off to the races pumping out and testing mRNAs that would tell cells how to manufacture the coronavirus’s spike protein so that the body’s immune system would recognize and destroy it. Within 42 days, the company had an mRNA vaccine ready to be tested in humans. It eventually went into hundreds of millions of arms.

Biotech harnesses the power of AI

Moderna is now turning its attention to other ailments that could be solved with mRNA, and the company is continuing to lean on AI. Scientists are still coming to Johnson with automation requests, which he happily obliges.

“We don’t think about AI in the context of replacing humans,” he told the Me, Myself, and AI podcast. “We always think about it in terms of this human-machine collaboration, because they’re good at different things. Humans are really good at creativity and flexibility and insight, whereas machines are really good at precision and giving the exact same result every single time and doing it at scale and speed.”

Moderna, which was founded as a “digital biotech,” is undoubtedly the poster child of AI use in mRNA vaccines. Moderna recently signed a deal with IBM to use the company’s quantum computers as well as its proprietary generative AI, MoLFormer.

Moderna’s success is encouraging other companies to follow its example. In January, BioNTech, which partnered with Pfizer to make the other highly effective mRNA vaccine against COVID, acquired the company InstaDeep for $440 million to implement its machine-learning AI across its mRNA medicine platform. And in May, Chinese technology giant Baidu announced an AI tool that designs super-optimized mRNA sequences in minutes. A nearly countless number of mRNA molecules can code for the same protein, but some are more stable and yield more protein. Baidu’s AI, called “LinearDesign,” finds these mRNAs. The company licensed the tool to French pharmaceutical company Sanofi.
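The scale of that search space follows from the redundancy of the genetic code: most amino acids can be spelled by several interchangeable codons, so the number of candidate coding sequences multiplies with every position in the protein. The sketch below counts those candidates under the standard genetic code. It is an illustration of the arithmetic only, not a description of how LinearDesign works. The function name is hypothetical, and stop codons and untranslated regions are ignored.

```python
from math import prod

# Number of synonymous codons for each amino acid (one-letter codes)
# in the standard genetic code. The 20 values sum to 61 sense codons.
CODON_COUNTS = {
    "A": 4, "R": 6, "N": 2, "D": 2, "C": 2, "Q": 2, "E": 2, "G": 4,
    "H": 2, "I": 3, "L": 6, "K": 2, "M": 1, "F": 2, "P": 4, "S": 6,
    "T": 4, "W": 1, "Y": 2, "V": 4,
}


def count_coding_sequences(protein: str) -> int:
    """Count the distinct codon sequences that encode the given protein.

    Multiplies the number of codon choices at each position; the stop
    codon is not included in the count.
    """
    return prod(CODON_COUNTS[residue] for residue in protein.upper())


if __name__ == "__main__":
    # Methionine-serine-leucine: 1 * 6 * 6 = 36 possible coding sequences.
    print(count_coding_sequences("MSL"))
```

Even a three-residue peptide has dozens of encodings, and the count grows multiplicatively with length, which is why exhaustively testing every sequence for a full-size protein is impractical.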

Writing in the journal Accounts of Chemical Research in late 2021, Sebastian M. Castillo-Hair and Georg Seelig, computer engineers who focus on synthetic biology at the University of Washington, forecast that AI machine learning models will further accelerate the biotechnology research process, putting mRNA medicine into overdrive to the benefit of all.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.