Why Don’t We Have Artificial Wombs for Premature Infants?

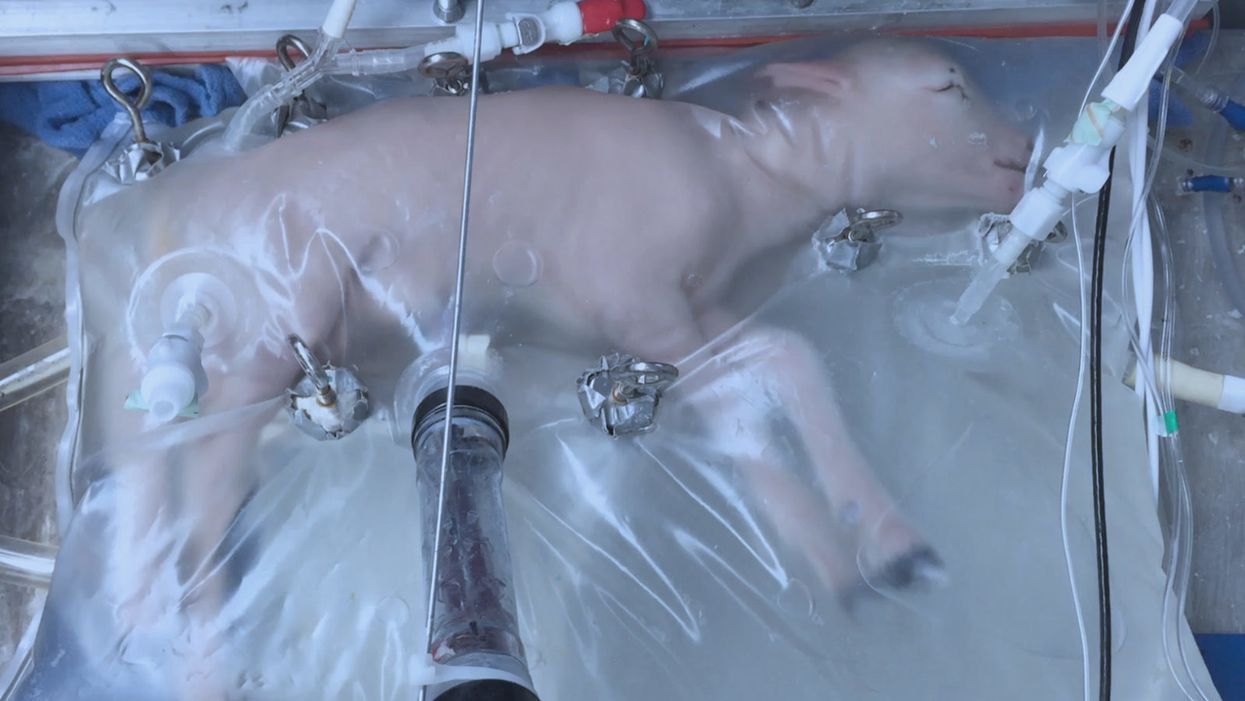

A lamb which was prematurely born at the equivalent of 23 weeks' human gestation, after 28 days of support from an artificial womb.

Ectogenesis, the development of a baby outside of the mother's body, is a concept that dates back to 1923. That year, British biochemist-geneticist J.B.S. Haldane gave a lecture to the "Heretics Society" of the University of Cambridge in which he predicted the invention of an artificial womb by 1960, leading to 70 percent of newborns being born that way by the 2070s. In reality, the 2070s are about when an artificial womb could first become clinically operational, but trends in science and medicine suggest that such technology would come in increments, each fraught with ethical and social challenges.

Currently, one major step is in the works: a system called an extra-uterine support device (EUSD), sometimes also called an Ex-Vivo uterine Environment (EVE), which researchers at the Children's Hospital of Philadelphia have been using to support fetal lambs outside the mother. It also has been called an artificial placenta, because it supplies nutrient- and oxygen-rich blood to the developing lambs via the umbilical vein and receives blood full of waste products through the umbilical arteries. It does not do everything that a natural placenta does, yet it also does some things that a placenta doesn't: it breathes for the fetus like the mother's lungs, and encloses the fetus in sterile fluid, just like the amniotic sac. It represents a solution to one set of technical challenges in the path to an artificial womb, namely how to keep oxygen flowing into a fetus and carbon dioxide flowing out when the fetal lungs are not ready to function.

Capable of supporting fetal lambs physiologically equivalent to a human fetus at 23 weeks' gestation or earlier, the EUSD could be ready for clinical trials in humans in the next two to four years, with hopes that it could improve survival of very premature infants. Existing medical technology can keep human infants alive when born in this 23-week range, or even slightly earlier (the record is just below 22 weeks). But survival is low, because most of the treatment is directed at the lungs, the last major body system to mature to a functional status. This leads to complications not only in babies born before 24 weeks' gestation, but also in a fairly large number of births up to 28 weeks' gestation.

So, the EUSD is basically an advanced neonatal life support machine that promises to square off the survival curve for infants born up to the 28th week. That is no doubt a good thing, but given the political prominence of reproductive issues, might any societal obstacles be looming?

Health care attorney and clinical ethicist David N. Hoffman points out that even though the EUSD may shift the concept of fetal viability away from the maturity of developing lungs, it would not change the current relationship of the fetus to the mother during pregnancy.

"Our social and legal frameworks, including Roe v. Wade, invite the view of the embryo-fetus as resembling a parasite. Not in a negative sense, but functionally, since it obtains its life support from the mother, while she does not need the fetus for her own physical health," notes Hoffman, who holds faculty appointments at Columbia University, and at the Benjamin N. Cardozo School of Law and the Albert Einstein College of Medicine, of Yeshiva University. "In contrast, our ethical conception of the relationship is grounded in the nurturing responsibility of parenthood. We prioritize the welfare of both mother and fetus ethically, but we lean toward the side of the mother's legal rights, regarding her health throughout pregnancy, and her right to control her womb for most of pregnancy. While some may argue that the EUSD system will shift the definition of viability to a point prior to the maturation of the fetus' lungs, ethical and legal frameworks must still recognize the mother's privacy rights as paramount, on the basis of traditional notions of personhood and parenthood."

Outside of legal frameworks, religion, of course, is a major factor in how society reacts to new reproductive technologies, and an artificial womb would trigger a spectrum of responses.

Speaking from the perspective of Lutheran scholarship, Dr. Daniel Deen, Assistant Professor of Philosophy at Concordia University in Irvine, Calif., does not foresee any objections to the EUSD, either theologically, or generally from Lutherans (who tend to be conservative on reproductive issues), since the EUSD is basically an improvement on current management of prematurity. But things would change with the advent of a full-blown artificial womb.

"Significant numbers of conservative Christians may oppose an artificial womb in fear that it might harm the central role of marriage in Christianity," says Deen, who specializes in the philosophy of science. "They may see the artificial womb as a catalyst for strengthening the mechanistic view of reproduction that dominates the thinking of secular society, and of other religious groups, including more liberal Christians."

Judaism, however, appears to be more receptive, even during the research phases.

"Even if researchers strive for a next-generation EUSD aimed at supporting a fetus several weeks earlier than possible with the current system, it still keeps the fetus inside the mother well beyond the 40-day threshold, so there likely are no concerns in terms of Jewish law," says Kalman Laufer, a rabbinical student and executive director of the Medical Ethics Society at Yeshiva University. Referring to a concept from the Babylonian Talmud that an embryo is "like water" until 40 days into pregnancy, at which time it receives a kind of almost-human status warranting protection, Laufer cautions that he's speaking about artificial wombs developed for the sake of rescuing very premature infants. At the same time though, he expects that artificial womb research will eventually trigger a series of complex, legalistic opinions from Jewish scholars, as biotechnology moves further toward supporting fetal growth entirely outside a woman's body.

While the technology treads into uncomfortable territory for social conservatives at first glance, it's possible that the prospect of taking the abortion debate in a whole new direction could engender support for the artificial womb. "Since [the EUSD] gives some justification to end abortion, by transferring fetuses from mother to machine, conservatives will probably rally around it," says Zoltan Istvan, a transhumanist politician and journalist who ran for U.S. president in 2016. To some extent, Deen agrees with Istvan, provided we get to a point when the artificial womb is already a reality.

"The world has a way of moving forward despite the fear of its inhabitants," Deen notes. "If the technology gets developed, I could not see any Christians, liberal or conservative, arguing that people seeking abortion ought not opt for a 'transfer' versus an abortive procedure."

So then how realistic is a full-blown artificial womb? The researchers at the Children's Hospital of Philadelphia have noted various technical difficulties that would come up in any attempt to connect a very young fetus to the EUSD and maintain life. One issue is the small umbilical cord blood vessels that must be connected to the EUSD as fetuses of decreasing gestational age are moved outside the mother. Current procedures might be barely adequate for integrating a human fetus into the device in the 18- to 21-week range, but going to lower gestational ages would require new technology and different strategies. It also would require numerous other factors to cover for fetal body systems that mature ahead of the lungs and that the current EUSD system is not designed to replace. However, biotechnology and tissue engineering strategies on the horizon could be added to later EUSDs. To address the blood vessel size issue, artificial womb research could benefit from drawing on experts in microfluidics, the field concerned with manipulating tiny amounts of fluid through very small spaces, which is ushering in biotech innovations like the "lab on a chip."

If the technical challenges to an artificial womb are indeed overcome, reproductive policy debates could be turned on their heads.

"Evolution of the EUSD into a full-blown artificial external uterus has ramifications for any reproductive rights issues where policy currently assumes that a mother is needed for a fertilized egg to become a person," says Hoffman, the ethicist and legal scholar. "If we consider debates over whether to keep cryopreserved human embryos in storage, destroy them, or utilize them for embryonic stem cell research or therapies, the artificial womb might put fathers on equal footing with mothers, since any embryo could potentially achieve personhood without ever seeing the inside of a woman's uterus."

Such a scenario, of course, depends on today's developments not being curtailed or sidetracked by societal objections before full-blown ectogenesis is feasible. But if this does ever become a reality, the history of other biotechnologies suggests that some segment of society will embrace the innovation and never look back.

A woman receives a mammogram, which can detect the presence of tumors in a patient's breast.

When a patient is diagnosed with early-stage breast cancer, having surgery to remove the tumor is considered the standard of care. But what happens when a patient can’t have surgery?

Whether it’s due to high blood pressure, advanced age, heart issues, or other reasons, some breast cancer patients don’t qualify for a lumpectomy, one of the most common treatment options for early-stage breast cancer. A lumpectomy surgically removes the tumor while keeping the patient’s breast intact, whereas a mastectomy removes the entire breast and nearby lymph nodes.

Fortunately, a new technique called cryoablation is now available for breast cancer patients who either aren’t candidates for surgery or don’t feel comfortable undergoing a surgical procedure. With cryoablation, doctors use an ultrasound or CT scan to locate any tumors inside the patient’s breast. They then insert small, needle-like probes into the breast, which create an “ice ball” that surrounds the tumor and kills the cancer cells.

Cryoablation has been used for decades to treat cancers of the kidneys and liver—but only in the past few years have doctors been able to use the procedure to treat breast cancer patients. And while clinical trials have shown that cryoablation works for tumors smaller than 1.5 centimeters, a recent clinical trial at Memorial Sloan Kettering Cancer Center in New York has shown that it can work for larger tumors, too.

In this study, doctors performed cryoablation on patients whose tumors were, on average, 2.5 centimeters. The cryoablation procedure lasted for about 30 minutes, and patients were able to go home on the same day following treatment. Doctors then followed up with the patients after 16 months. In the follow-up, doctors found the recurrence rate for tumors after using cryoablation was only 10 percent.

For patients who don’t qualify for surgery, radiation and hormonal therapy are typically used to treat tumors. However, said Yolanda Brice, M.D., an interventional radiologist at Memorial Sloan Kettering Cancer Center, “when treated with only radiation and hormonal therapy, the tumors will eventually return.” Cryoablation, Brice said, could be a more effective way to treat cancer for patients who can’t have surgery.

“The fact that we only saw a 10 percent recurrence rate in our study is incredibly promising,” she said.

Urinary tract infections account for more than 8 million trips to the doctor each year.

Few things are more painful than a urinary tract infection (UTI). Common in men and women, these infections account for more than 8 million trips to the doctor each year and can cause an array of uncomfortable symptoms, from a burning feeling during urination to fever, vomiting, and chills. For an unlucky few, UTIs can be chronic—meaning that, despite treatment, they just keep coming back.

But new research, presented at the European Association of Urology (EAU) Congress in Paris this week, brings some hope to people who suffer from UTIs.

Clinicians from the Royal Berkshire Hospital presented the results of a nine-year clinical trial in which 89 men and women who suffered from recurrent UTIs were given an oral vaccine called MV140, designed to prevent the infections. Every day for three months, the participants were given two sprays of the vaccine (flavored to taste like pineapple) and then followed over the course of nine years. Clinicians analyzed medical records and asked the study participants about symptoms to check whether any experienced UTIs or had any adverse reactions from taking the vaccine.

The results showed that across nine years, 48 of the participants (about 54%) remained completely infection-free. On average, the study participants remained infection-free for 54.7 months, or about four and a half years.

“While we need to be pragmatic, this vaccine is a potential breakthrough in preventing UTIs and could offer a safe and effective alternative to conventional treatments,” said Gernot Bonkat, Professor of Urology at the alta uro Medical Centre for Urology in Switzerland, who is also the EAU Chairman of Guidelines on Urological Infections.

The news comes as a relief not only for people who suffer chronic UTIs, but also for doctors who have seen an uptick in antibiotic-resistant UTIs in the past several years. Because UTIs usually require antibiotics, patients run the risk of developing a resistance to the antibiotics, making infections more difficult to treat. A preventative vaccine could mean fewer infections, fewer antibiotics, and less drug resistance overall.

“Many of our participants told us that having the vaccine restored their quality of life,” said Dr. Bob Yang, Consultant Urologist at the Royal Berkshire NHS Foundation Trust, who helped lead the research. “While we’re yet to look at the effect of this vaccine in different patient groups, this follow-up data suggests it could be a game-changer for UTI prevention if it’s offered widely, reducing the need for antibiotic treatments.”