Would You Want to Know a Decade Early If You Were Getting Alzheimer's?

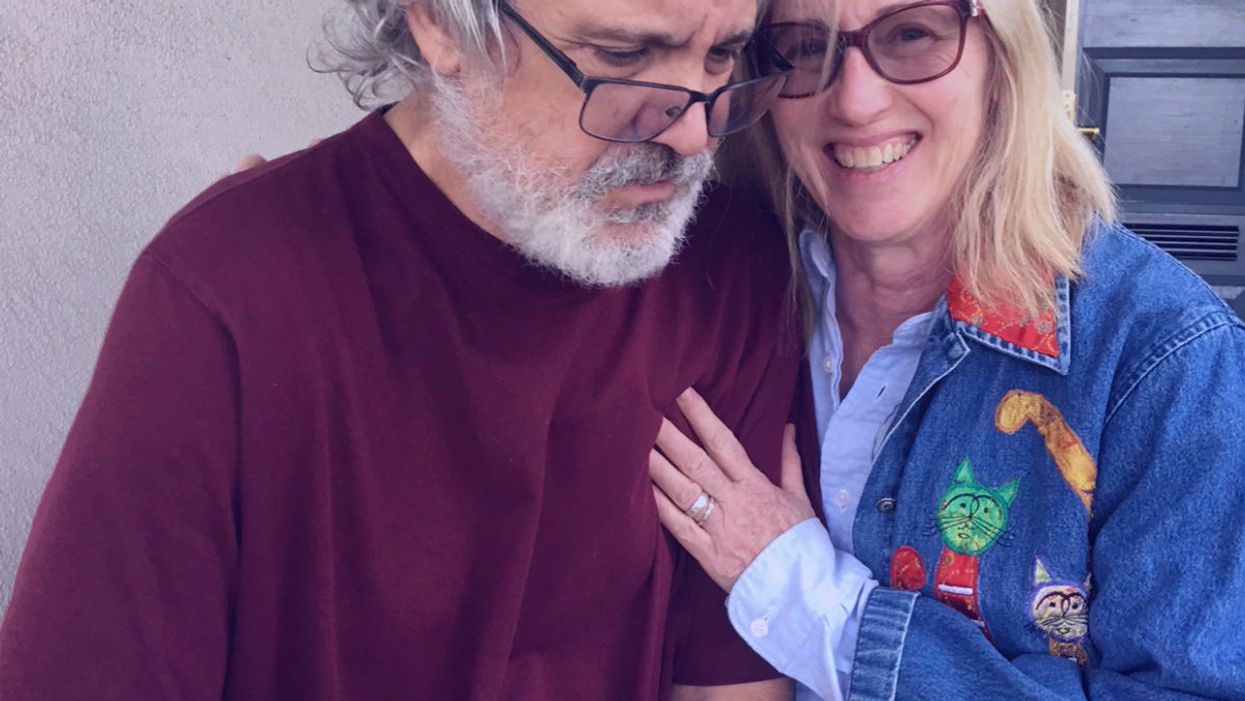

The author pictured with her husband Dallas, who has Alzheimer's.

Editor's Note: A team of researchers in Italy recently used artificial intelligence and machine learning to diagnose Alzheimer's disease on a brain scan an entire decade before symptoms show up in the patient. While some people argue that early detection is critical, others believe the knowledge would do more harm than good. LeapsMag invited contributors with opposite opinions to share their perspectives.

I first realized something was wrong with my dad when I came home for Thanksgiving 20 years ago.

I hadn't seen my family for more than a year after moving from New York to California. My father was meticulous, a multi-shower-a-day man, a regular Beau Brummell. He was never officially diagnosed with dementia, but it was easy to figure out after he stopped leaving the house, stopped reading, stopped being himself. My mother knew, but she never sought help. After his illness showed itself, I asked her if she had considered a nursing home. "Never," she told me. "I can take care of him." And she did.

She gave herself a break once to visit me, and it was the first time she traveled separately from him since they eloped at seventeen. My brother watched my father, and it was not smooth. Dad was angry, hallucinating, and demanding his gun, which had been disposed of long ago. While Mom was visiting me in California, we played some board games. One demanded honest answers. The card read, What are you most afraid of? "Dementia," she said.

My father never saw this coming; none of us did.

Dementia ran on my mother's side. Her mother, my Nana, was senile, the popular diagnosis for older folks back then. My grandfather tried his hardest to take care of her, but she kept escaping their tidy 6th floor apartment to run away. My mother would go over every day to take care of them, but once my grandfather became ill, she took her mother into our apartment. She had two small children, Nana, and her husband in a two-bedroom flat. Nana talked to people under plates, wore tissues on her head, and tried to escape. We were on the first floor, so she could run into traffic if all eyes weren't on her. Soon, it was too much, even for my Wonder Woman mom. Nana was placed in a nursing home and died soon after.

My mother dropped dead on a NYC sidewalk two years after my father started to deteriorate. She was probably going to the store to buy milk and cigarettes. A kind stranger called 911, and a cop came to my parents' door soon after to tell my dad the news. My father cried for death, raged and ranted, then calmed down enough to come back as the dad we remembered for the week of mourning. He even ordered a Manhattan at dinner. His death came exactly a week and an hour after my mother's. He died of a broken heart. My husband cried with all his body after we left the cemetery, weeping, "Poor Buck. Poor Buck." I had never seen him cry before.

Now, 18 years later, I sit here with my husband, 59 years old, as he suffers from the same hideous disease.

He is talking to someone I can't see, even laughing with him. He holds a Ph.D. in literature, taught college, had a single-digit golf handicap, and ate well. We never saw this coming. One day he went to type and jumbled letters came on the screen. He would show up late or early for his classes, wondering what was wrong with the students. He started running red lights. He was graciously counseled to retire, and he did, at 55. His doctor told him it was depression. The second opinion agreed. He was told to do nothing for a year, and he did. He played golf a bit, then one day he couldn't speak or think clearly. I came home from work to find him roaming the neighborhood, eyes ablaze, muttering to himself. I went on family leave. Many tests later we got the working diagnosis, but it meant nothing to him. He never reacted to the words Primary Progressive Aphasia or dementia. I was glad. If he were lucid, I knew what he would talk about doing. He told me after my dad's death that he did not want that life for himself.

I worry I may get it, too. It almost seems inescapable. Dementia has no cure, and the treatments for the symptoms are hit and miss. I thought about getting the full flight of predictive tests, but I know myself, and I scare myself into bracing for the worst. Others scare me, too, when I read their online statements about ending their lives if they learn they have it: I told my children to take me to a state where assisted suicide is legal; it's easy to overdose; I don't want to be a burden on my children. These are caregivers on social media forums. They live with the terror, eyes wide open. We have no children, but who would I burden? My sisters? My brother? Do I stay or do I go? This disease invites pandemonium. Assisted murder-suicides with caregiver spouses of those with dementia don't merit headlines, but their stories are on the sidebars. No thanks. I work on God's timeline.


A diagnosis today would paralyze me and create melancholy for all who know me. I would second guess everything, I would read everything, I would cry, I would hardly live. I would be tempted to pick up that first drink after 20 plus years sober. I would even think about ending my life. It would be difficult not to consider. As a high school English teacher, I talk about suicide when I teach Hamlet. I tell the students suicide is a permanent solution to a temporary problem. Dementia isn't temporary. There are no survivors – yet.

I often think what my relatives would have done with an advance diagnosis. My grandmother was a classic worrier. She would have been beyond distraught. My father might have found that gun. My husband would have taken the right number of pills.

An advance diagnosis would paralyze me.

I appreciate the arguments for early diagnosis. Some people are made of sterner stuff. They have the mindset I lack. I admire so many who are contributing to the current conversation about dementia and are active advocates for a cure. They have found a purpose in their fate.

I don't need a test to get my ducks in a row. Loving those with dementia has prompted me to be prepared. I have a different type of bucket list: reset my priorities, slow down, be present, educate others, and make my legal plans. If and when it happens, there will be time for toast and tea and a walk along the shore. There will be time to plan for the inevitable and unenviable end. I am morbid enough to know I will recognize the purple elephant in the room. I don't want the shock and awe now. I can wait. My sisters agree. We will keep our elbows out.

Editor's Note: Consider the other side of the argument here.

A woman receives a mammogram, which can detect the presence of tumors in a patient's breast.

When a patient is diagnosed with early-stage breast cancer, having surgery to remove the tumor is considered the standard of care. But what happens when a patient can’t have surgery?

Whether it’s due to high blood pressure, advanced age, heart issues, or other reasons, some breast cancer patients don’t qualify for a lumpectomy, one of the most common treatment options for early-stage breast cancer. A lumpectomy surgically removes the tumor while keeping the patient’s breast intact, whereas a mastectomy removes the entire breast and nearby lymph nodes.

Fortunately, a new technique called cryoablation is now available for breast cancer patients who either aren’t candidates for surgery or don’t feel comfortable undergoing a surgical procedure. With cryoablation, doctors use an ultrasound or CT scan to locate any tumors inside the patient’s breast. They then insert small, needle-like probes into the patient's breast which create an “ice ball” that surrounds the tumor and kills the cancer cells.

Cryoablation has been used for decades to treat cancers of the kidneys and liver—but only in the past few years have doctors been able to use the procedure to treat breast cancer patients. And while clinical trials have shown that cryoablation works for tumors smaller than 1.5 centimeters, a recent clinical trial at Memorial Sloan Kettering Cancer Center in New York has shown that it can work for larger tumors, too.

In this study, doctors performed cryoablation on patients whose tumors were, on average, 2.5 centimeters. The cryoablation procedure lasted for about 30 minutes, and patients were able to go home on the same day following treatment. Doctors then followed up with the patients after 16 months. In the follow-up, doctors found the recurrence rate for tumors after using cryoablation was only 10 percent.

For patients who don’t qualify for surgery, radiation and hormonal therapy are typically used to treat tumors. However, said Yolanda Brice, M.D., an interventional radiologist at Memorial Sloan Kettering Cancer Center, “when treated with only radiation and hormonal therapy, the tumors will eventually return.” Cryoablation, Brice said, could be a more effective way to treat cancer for patients who can’t have surgery.

“The fact that we only saw a 10 percent recurrence rate in our study is incredibly promising,” she said.

Urinary tract infections account for more than 8 million trips to the doctor each year.

Few things are more painful than a urinary tract infection (UTI). Common in men and women, these infections account for more than 8 million trips to the doctor each year and can cause an array of uncomfortable symptoms, from a burning feeling during urination to fever, vomiting, and chills. For an unlucky few, UTIs can be chronic—meaning that, despite treatment, they just keep coming back.

But new research, presented at the European Association of Urology (EAU) Congress in Paris this week, brings some hope to people who suffer from UTIs.

Clinicians from the Royal Berkshire Hospital presented the results of a nine-year clinical trial in which 89 men and women who suffered from recurrent UTIs were given an oral vaccine called MV140, designed to prevent the infections. Every day for three months, the participants were given two sprays of the vaccine (flavored to taste like pineapple) and then followed over the course of nine years. Clinicians analyzed medical records and asked the study participants about symptoms to check whether any experienced UTIs or had any adverse reactions from taking the vaccine.

The results showed that across nine years, 48 of the participants (about 54%) remained completely infection-free. On average, the study participants remained infection-free for 54.7 months, or about four and a half years.

“While we need to be pragmatic, this vaccine is a potential breakthrough in preventing UTIs and could offer a safe and effective alternative to conventional treatments,” said Gernot Bonita, Professor of Urology at the Alta Bro Medical Centre for Urology in Switzerland, who is also the EAU Chairman of Guidelines on Urological Infections.

The news comes as a relief not only for people who suffer from chronic UTIs, but also for doctors who have seen an uptick in antibiotic-resistant UTIs in the past several years. Because UTIs usually require antibiotics, patients run the risk of developing a resistance to the antibiotics, making infections more difficult to treat. A preventative vaccine could mean fewer infections, fewer antibiotics, and less drug resistance overall.

“Many of our participants told us that having the vaccine restored their quality of life,” said Dr. Bob Yang, Consultant Urologist at the Royal Berkshire NHS Foundation Trust, who helped lead the research. “While we’re yet to look at the effect of this vaccine in different patient groups, this follow-up data suggests it could be a game-changer for UTI prevention if it’s offered widely, reducing the need for antibiotic treatments.”